“A superhero in a black and gray costume, with a cape and a mask, stands on top of a tall building at night. The costume has a bat-like symbol on the c…”

Context if you need it:

- Midjourney, DALL-E via ChatGPT, and Stable Diffusion all exist and can make images of Batman that are obviously inspired by copyrighted material the models were trained on.

- The NYTimes has sued OpenAI, and multiple other lawsuits exist between copyright holders and the model makers, alleging that there is copyright infringement under various legal theories.

- Note: this post was written before OpenAI released their demo of Sora, which will warrant its own consideration of how close we are to some of the AI movie creation I describe later in the post.

I love Midjourney and Stable Diffusion (I’m more into AUTOMATIC1111 than ComfyUI), and I have hundreds of hours in both. The first time you use a string of words to instantly generate an image that captures the essence of your meaning, often in a quirky, funny, or surprisingly philosophical way, is a magic moment akin to the first swipe on an iPhone or the first reply in a chat room: experiences with technology that happen once a decade and undeniably change your view of what will be possible in the world forevermore.

AI is immediately creating copyright tension, just as the internet did before it. A very short version of this analysis would simply predict that the exact same scenario will play out with generative AI models, and indeed I think that is likely. Google and other web-crawling companies were spared from any serious regulation in the US, and in fact Google won a somewhat precedent-setting case brought by authors that allowed it to scan entire libraries of books so long as it wasn’t making the full text freely available (quite relevant to the analysis in the case of LLMs). In other venues, Google agreed, either voluntarily or under regulation, to pay certain large publishers for the ability to show links to their content in search results. We live in a capitalist society, and new technology often pulls wealth away from old industries quickly and suddenly. Google killed many smaller business models, particularly those built around content, and the larger players had to cut deals to stay afloat. Laws were not suited to protect the old models, and there was little capitalist incentive or political will to correct that, so the old models suffered to the benefit of the new. The same will likely happen with generative AI, as OpenAI has quickly become one of the most valuable private companies on the planet, and Congress’s ability to pass a law regulating technology is very questionable.

But let’s pretend that outcome is not inevitable, and that the United States will need to develop a framework for understanding the impact of generative AI on creative work, and for deciding whether and how to modify copyright law.

Copyright law and what’s different with Generative AI

Copyright law has a basis in the Constitution, which says Congress can give authors rights to their work for a limited time. Many also assert a moral basis (which is really a capitalist basis): that an author deserves compensation for their work. From that sprang our current copyright law, which dates to the Copyright Act of 1976 and has held up surprisingly well despite the dramatic transformation in how content is created and distributed over the nearly 50 years since.

I won’t do a comprehensive analysis of every element of copyright law as it applies to AI. The main areas of debate center on a few elements specifically:

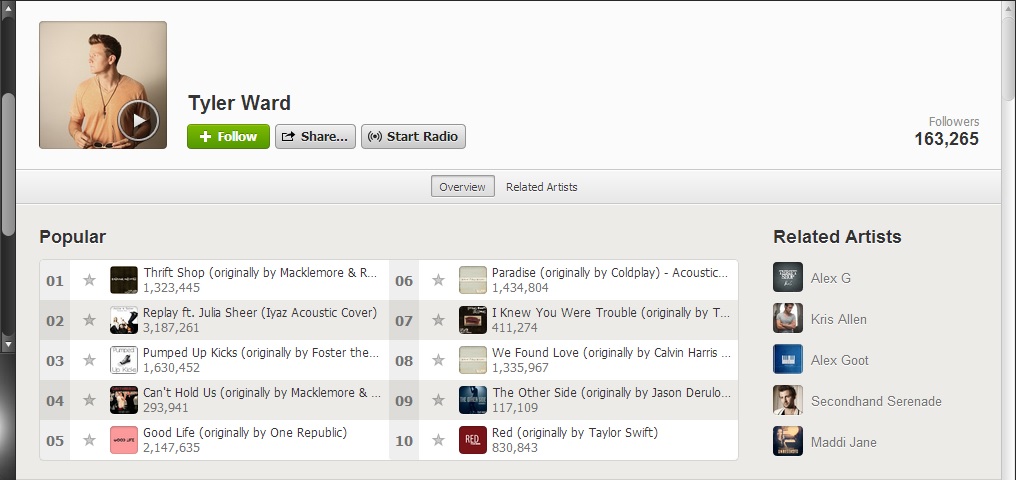

- Originality: A work must be original to be covered by copyright. Certainly many of the works ingested by LLMs are copyrighted. But what about the output? Is the cover image at the top of this post original? I told ChatGPT to make an image of a Batman-like character. It’s certainly unique in the sense that I would imagine it’s the only one EXACTLY like it in the world. If I had drawn it by hand it would qualify as original (though there would be other issues), and if I had used Adobe Photoshop to create it, it would still qualify. More on this later.

- Fair use: Copyright law has a concept called transformative use, which works something like a get-out-of-jail-free card. Copyright law wants to make it clear that criticism, parody, etc. are protected, given our strong bias toward enabling First Amendment speech, so it grants considerable leniency to behavior that would otherwise be considered infringing. This is a red herring in my mind; the fact that copyrighted materials are in some way processed by the algorithm before some ultimate end material is produced is essentially irrelevant. The algorithm itself has no intent, so it can’t be transforming a work for the sake of parody or critique. It matters far more what a user of a model does with the output, which is a case-by-case determination and so is unlikely to ever be the basis for a broad ruling on generative AI models and copyright.

- Nothing else: Every other element is basically irrelevant as applied to the models themselves. This isn’t to say that there aren’t interesting questions related to the output of the models, but those decisions are unlikely to be the basis for a broad ruling about whether the models themselves violate copyright law as the cases against OpenAI and others allege.

How will current copyright law play out with regard to the generative models?

The NYTimes suit relies entirely on the premise that since GPT models are trained on a dataset which includes copyrighted NYT material, and they can reproduce something sort of like that material when prompted correctly, they must be infringing. There are more than 60 pages in the complaint, with lots of flowery language about the value of the news and how great the NYT is (I love the NYT and am a longtime subscriber). But all of that is going to be irrelevant to the legal question, which the court will do its best to analogize to the internet indexing of websites, and in general this argument is not very strong for the NYTimes. If it’s OK for Google to index and reproduce the EXACT text of NYTimes articles with attribution, why would it not be OK for OpenAI to index and reproduce the INEXACT text of NYTimes articles with attribution? The fact that what these models produce is not exactly the copyrighted material (certainly in image generators, and mostly in text generators) is pretty relevant. This is why I think it is highly likely that the NYTimes suit will fail. LLMs may ingest copyrighted works in their training, but they output something different, so they aren’t actually reproducing the copyrighted work anyway.

And every other fact that OpenAI and other LLM makers will present is pretty strong for them. It’s not exactly legally relevant (though the NYTimes complaint spends a lot of time on it anyway), but trademark law has a concept called likelihood of confusion: whether consumers would believe an infringing product is actually being sold by the original trademark holder. There’s NO chance anybody is going to ChatGPT asking for pictures of Batman and thinking that it is an official DC product. There’s similarly no chance that the people prompting ChatGPT to produce text substantially similar to NYTimes articles are confused about whether they are getting it from the NYTimes itself. If copyright law is intended to protect a creator’s ability to profit from their original work, it seems very unlikely that an LLM is currently a substitute in any way for going to the NYTimes for news, or to a comic book store to buy a Batman comic.

What happens when Generative AI is a lot better?

With regard to copyright infringement, the internet was primarily a distribution innovation. Napster was bad for record labels because it created unlimited and unrestricted access to song files. I can google the name of any artist, see their art, make a copy of the file, and I sort of have the image now. You can email somebody a PDF of a comic book (if you have one). Congress passed the DMCA to make sure that access to copyrighted material through centralized providers wasn’t too broad, and largely that has struck the right balance, though the internet has still completely disrupted almost every form of media to varying degrees. Print media is essentially dead, the music industry has changed forever, etc. But the internet did not eliminate human creativity in the slightest, and arguably it greatly enhanced human creativity, because the ability to distribute and access creative works was democratized dramatically.

The words in the Copyright Act that the internet complicated were “reproduce” and “distribute”. It was not trivial to reproduce a song before the internet, at least not in a way that let you distribute it permanently to millions of people. Generative AI is not an access innovation but an originality innovation. Indeed, a big challenge for the cases currently being made in court against generative AI technologies is that what they produce is not actually a copy, but something we have historically regarded as an original-enough reinterpretation. Generative AI ought to reframe our understanding of originality dramatically, both for written text and images, and soon enough for video as well. If you are willing to pay the compute cost, you could conceivably assign a Stable Diffusion instance the task of creating an image for every possible superhero design, or every possible Pokémon. You could ask an LLM to generate the first paragraph of every possible news story about the Middle East. LLMs have revealed something uncomfortable about human creativity: that it is more deterministic than we’d previously chosen to believe, and that the monkeys with infinite typewriters are here at our disposal right now.

Copyright law was intended to protect the creator of a work from having it exploited by others without receiving any profit, and in some regard it does feel like Stable Diffusion, for example, is benefiting from copyrighted material without giving any sort of compensation. Whether courts twist current law to conform is a somewhat irrelevant question, because it seems inevitable that money will flow in due time to award the largest copyright holders some modest compensation for the ability to train AIs on their works (à la Google indexing in the Web 1.0 era). This is a question of inputs rather than outputs.

On outputs, though, what do we do with the reality that machines will soon be generating an infinite amount of content that likely outpaces and completely mimics, and thus replaces, much of the output of humans in the creative realm? The Copyright Office’s current stance that these works are not subject to copyright protection seems certain to become obsolete quickly, under pressure from the large corporations that produce much of the valuable copyrighted work in the world. They will no doubt use new technologies to make that work more efficiently, and will demand that the capitalist system they operate under afford them the same protections for a script partially written by an AI as it does for one written entirely by humans. That iteration of the question seems, again, pretty obvious in its outcome, and for large studios, music publishers, news organizations, and the other major institutions that primarily benefit from copyright protections, not much will or ought to change. Whether The Avengers 12 is made partially with AI or not, Disney will find a way to ensure it is not freely available. Even if the budget for The Avengers 12 is reduced across the board with AI actors, AI voices, AI imagery, and an AI script, the cost to market, promote, and distribute the movie will be plenty of incentive to ensure its creation also establishes a copyright protection.

The bigger challenge is 10 or 15 years beyond that, when I can simply ask ChatGPT to make Avengers 13 and show it to me. That sounds ridiculous now, because what I currently get when I ask ChatGPT for the same is inserted below.

My contention here is that the image above is “original” in our current framing of copyright law, but also completely undeserving of any legal protection. If the output moved, and had mediocre dialogue, and a plot arc, and the villain lost in a startling comeback by the Iron Man character right as all appeared to be lost, would it be more original or more deserving? Disney / Marvel will likely make that movie and spend millions of dollars in all sorts of ways to promote it, and their efforts and investment in that regard deserve protection. But what if an AI COULD make not just one exact copy of that movie by complete chance, but also make, deterministically, every possible iteration of that movie if given the correct prompt and infinite chances to make it? On ChatGPT 12, when I ask for an Avengers movie, will the result be as obviously unoriginal to us as the image above, and would it deserve copyright protection?

Only one outcome strikes me as likely: nothing will change in copyright law at all. As long as copyright law protects output from AI models, we are largely in the same place as we are today, and AI is just another tool in the toolbox of creative entities. Works subject to copyright have no intrinsic value other than what they are worth on the open market, and though AI tools will make it easy to flood the market with crap, the market will deem it worthless and nobody will bother duplicating it anyway. The best and most valuable works will be promoted, marketed, surfaced by social media, and otherwise invested in or chosen by the masses as having merit, and exploiting them for profit will be protected by copyright law just as it is now.

The gap in this approach, though, is that it seems more likely than ever that media may evolve to be more adaptable to the preferences of the individual, and that more valuable work over time will be co-created by the technology and the consumer. We call this “fan fiction” today: works that generally, and often blatantly, violate copyright but have non-canon plots and no distribution beyond tiny, fervent communities. Fan fiction is largely ignored by copyright holders because it tends not to conflict with the main works, and suing your biggest fans is often a bad look. But what if a huge percentage of media were fan fiction? This is already starting to happen with entertainment like TikTok and Roblox, where the majority of the content is user-generated with the tools the platform provides rather than created or curated by large entities. It may not be far-fetched to imagine a future where the majority of content preferred by consumers is in some way iteratively generated and modified by mass user input rather than curated by teams of creatives.

In the internet days (roughly 2001 to November 2022, when ChatGPT launched), copyright law did nothing, and though capitalism took over, it did not save a key pillar of our society that was built on existing distribution models: journalism. There was a Cambrian explosion of journalism models after the internet came of age, and essentially none of them worked for very long. Today you can find the nth iteration of TechCrunch, and it is ironic in some respects that the NY Times is leading the way in suing generative AI companies, but ultimately the news lost the battle long ago, when the business model that had long sustained it (ads) found a more efficient home in the new technology that reduced distribution costs to zero. This was not immediately clear; it took many years and iterations of both social media and journalism on the internet to arrive at that conclusion. But ultimately far more people today get their “news” directly from social media than from any legitimate journalistic source, and the social media companies earn a lot more from serving ads than the journalists do.

Now, in the coming AI age (2022 onward), we will quickly face the problem in a different form. What key pillar of society suffers when I can ask GPT for Avengers 12 and get more than an ersatz replica of what Disney would produce? Much as Google and Facebook reproduced news until they choked the industry they siphoned from, I suspect the entertainment industry will be largely demolished by the coming innovations in AI; when you can get a custom version of the next episode of your favorite show, why defer to what a writer in Hollywood thinks you might like instead? I think the copyright implication of generative AI technology in this generation will be that less and less of the content consumers enjoy comes from big publishers and producers, and more and more comes from the outputs of generative AI models in a one-of-millions format, which therefore will not be covered by copyright. But copyright holders will have secured some fee on inputs into training models, and it’s likely you’ll be generating your custom Avengers 13 movie in a Disney-owned and -operated LLM anyway. And like print media in the age of the internet, many of those copyright holders will wither away as the majority of the revenue in the industry moves from the creators of the IP to the technological innovators that control the most powerful LLMs.
