Back in early 2024 I wrote about Generative AI in images. It was a young and naive time in image generation; there was, essentially, no way then to generate a video on consumer hardware, and as I was writing that article OpenAI’s first version of Sora was previewed to much fanfare. It was subsequently released to much disappointment. In 2025 it would be re-released to immense fanfare, and this week OpenAI announced they will be killing it entirely. This feels an apt moment to revisit the landscape I observed in that article.

In 2024 my core view was that the law was likely to continue being decided, at least in the near term, in favor of the model makers. Copyright law has in recent history helped incumbents fight against distribution innovations (e.g., the internet), but Generative AI is not a distribution innovation. Rather, it’s an originality innovation, as it can produce essentially free, infinite variations of material that would be copyrightable IF it were man-made, and IF it were not covered by things like fair use. Indeed, in the legal battles discussed in my first consideration of this topic, the law has settled in favor of the model makers. Generative AI models are not reproducing copyrighted material, and training models on copyrighted material has not (yet) been deemed any sort of legal violation. What I did not anticipate, however, is that the copyright and trademark office would continue to find that the works are also not copyrightable at all – but again, this helps the model makers, because their innovations are not generating copyrightable work!

The real change since 2024 is that the models have gotten SO MUCH BETTER. It seemed clear in 2024 that eventually people would prompt their way to Avengers 13. While Sora and Seedance 2 aren’t quite doing entire movies, certainly the meme that “Hollywood is dead” is repeated in comments below the most impressive outputs from those models. It is now very easy to imagine a future where entire movies could be convincingly prompted to life, and that future could be 2027. The videos produced by those models are already convincing as ersatz productions of a major studio (see above example). And, as the regulatory regime of the intervening years has made clear, there is nothing, strictly speaking, stopping them from releasing these models into the wild. Yet Sora is shutting down today. And Seedance 2.0, despite being a model made by a Chinese company (not a regulatory regime known for its respect of US copyright laws), is self-censoring its model, forestalling its release due to its perceived proficiency in producing copyrighted material.

IF the model makers were constrained by the law, there would be little to discuss. But instead, they are self-censoring. Why? I think there are a few possible explanations.

One possible explanation is ethics. It might be the case that the model makers worry that the impact of unbridled Avengers generation, even if legal, could wreak havoc on Hollywood and related industries, and they feel bad! However, the foundation model labs have not shown a particular concern for perceived unethical behavior in other critical realms. OpenAI and Anthropic were most recently in the news cycle for agreeing to help the government surveil its own civilians and assist in the commission of war crimes (at least contractually), despite some public display of remorse about being forced to do so begrudgingly. Elsewhere, chatbots are causing teenage suicide, and the CEOs of these companies are loudly pronouncing the American worker obsolete. Grok, perhaps the least internally restrained of the companies building their own models in America, has been sued in multiple geographies for helping users undress women of all ages. It seems far-fetched to think that HERE, where AI meets Mickey Mouse (not Steamboat Willie but REAL American Corporate Mickey), is the point at which companies have found some ethical north star.

Another possible explanation is that the current legal regime is rather unstable, and the companies believe releasing these models in their unconstrained form would in and of itself spur a change in the law. Since I’ve written and thought about the legal regimes that govern these models, I’m tempted to overweight this explanation. Arguably there IS something surface-level illegal-feeling about generating an IP-laden video without permission when its quality is so close to what the IP holder themselves would produce, even if the tools used to produce it are not violating copyright laws. And perhaps because we do have an entire legal regime that feels like it should apply, both consumers and the model makers sense that a full release of these models would shift the law quickly to correct the gap that permits them, much like our laws have changed (…slowly) in the past to account for new technology.

Another explanation is that they fear consumer backlash. People may be willing to tolerate, these companies could think, AI as a productivity tool, as a war aid to our government, and apparently as a companion and a replacement in the workplace. But a system that too obviously and too directly allows the user to generate something like “a new Marvel movie, in the style of Marvel, with Marvel characters, but better tuned to my preferences” is a bridge too far. That logic doesn’t totally hold for me either, as my framing might suggest. Entertainment is, on the scale of human need, rather low compared to other things AI is replacing.

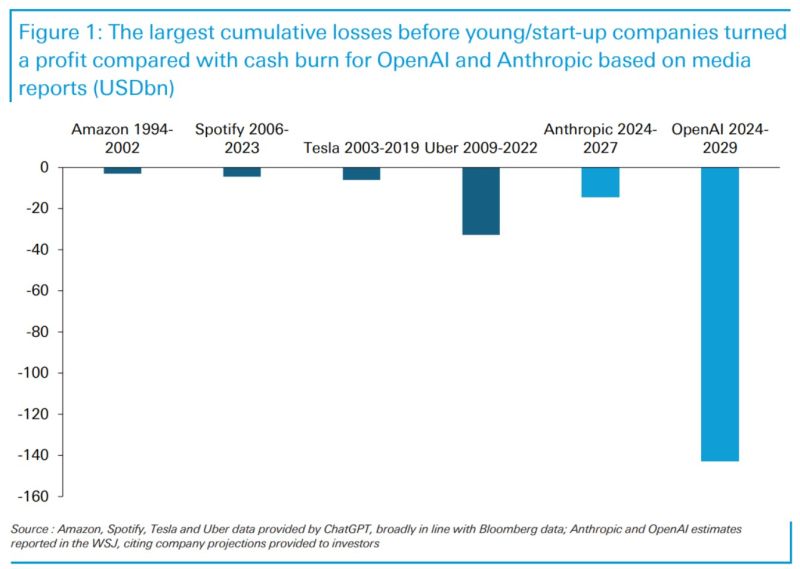

The most likely explanation, though, is business model related – it may just be that, relative to other bets OpenAI can make, there’s less of a path to profit in generative video. The inference cost for video is enormous, and no business model has yet emerged to monetize these generations for the model makers beyond consumer subscriptions. Based on the little we know of OpenAI, the unit economics are currently somewhere between “pretty bad” and “the worst unit economics of any technology company to ever exist”.

OpenAI also seemed quite far from figuring out a business model to serve Sora at all. Sora was as successful at showing off the technical chops of their video model as it was in highlighting that OpenAI has no idea how to build and monetize a consumer mobile app. Sora was absolutely the least impressive onboarding experience I’ve ever had for a product by a company as high profile as OAI. They also have no social graph of their users, unlike Meta or Google, and so they have no idea how to serve a user content they might find interesting. They also kept a tight funnel on signups throughout the app’s short life, meaning that I never found myself with more than a handful of real life friends using the app. They also never iterated in any way on the UI; I am no mobile app genius, but it doesn’t take much more than a cursory glance at any other consumer AI app (Glif, Grok, Higgsfield, etc) to see that Sora ought to have had pre-baked prompts and better discovery tools. Given how thoroughly OpenAI has vacuumed up talent, it’s actually shocking that they didn’t bother to hire even one person who could improve the Sora experience. There was also no attempted monetization in the app of any kind, ever. Despite OAI signing a billion-dollar licensing deal with Disney at the same time they released Sora 2, there was never any evidence of that partnership in Sora itself. For the resources at their disposal, it’s hard to grade the execution of the OpenAI team on Sora as anything but an F. Ultimately it felt more like a tech demo. Other post-mortems on Sora have suggested that people simply don’t want to be that creative, but I think this is completely wrong. MidJourney is still a huge success today, as are companies like Glif and a cottage industry of open source image generation users. OpenAI had the best model by far, but completely failed to package it in an approachable and usable UI.

So this leaves us with an interesting question – why isn’t anybody building a great generative video product using the most advanced models? The legal / regulatory regime isn’t in the way. The ethical concerns seem minimal compared to other areas where model makers have confronted questions of ethics (and barreled forward). And while business model / unit economic concerns are “real”, they are also real in every other LLM product and the negative margin has not stalled development. Arguably releasing a great generative video product would differentiate at least one of the model companies (Anthropic does not even have an image model).

My guess is that, by this time next year, a company we might once have referred to as “a wrapper” on Seedance’s API will take off, and risk the creator backlash by serving a video model on a far better consumer UI than Sora. The economics of serving these models on an inference basis can only get better. There is simply too much money to be [made / raised] with a viral AI hit right now for the above listed concerns to stop some founders from trying. Only then will we find out whether copyright law can be resurrected, or whether consumer ethics could be a real blockade to prompting our way to bad versions of Marvel movies.
